How AI Is Transforming the Modern Data Warehouse

Why AI Is Redefining Enterprise Data Warehousing?

Enterprise data warehouses are under more pressure today than at any point in their history. Data volumes are growing faster than IT teams can model them. Business users expect real-time dashboards instead of overnight reports. AI and machine learning workloads demand clean, structured, well-governed data on tap. And self-service analytics is no longer a perk—it is a baseline expectation across finance, marketing, supply chain, and product teams.

The traditional data warehouse was never designed for this. It was built for batch loads, predictable schemas, and a small group of trained analysts. The shift toward AI-driven decision-making is forcing a fundamental rethink of how data is stored, prepared, governed, and consumed. This article looks at why legacy data warehouses are struggling, where AI is already reshaping the stack, what enterprises need to do to make their data truly AI-ready, and the risks leaders must plan for along the way.

Why Legacy Data Warehouses Need Modernization for AI Workloads

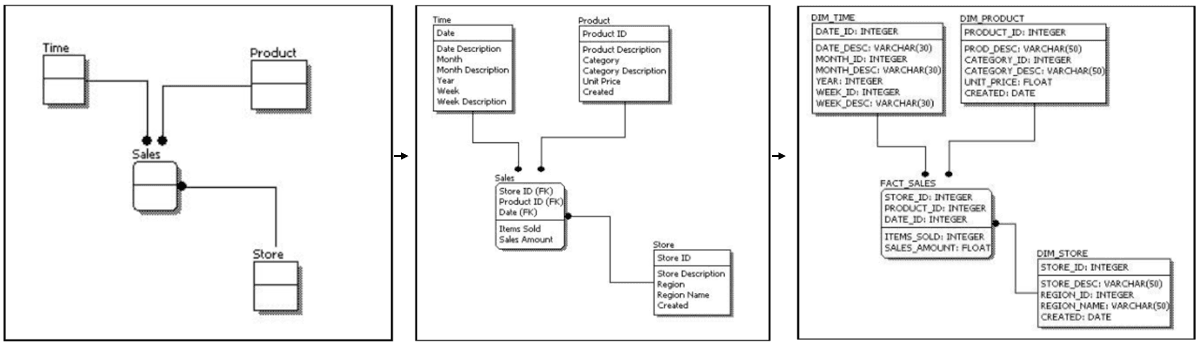

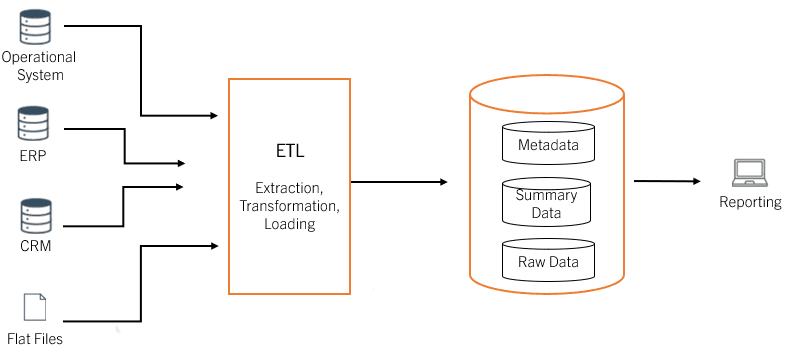

Most legacy data warehouses share a common architecture: nightly batch ETL jobs, rigid star or snowflake schemas, and reporting cycles measured in days or weeks. That model worked when business questions were predictable and data sources were limited to a handful of operational systems. It does not work when an enterprise is integrating clickstream data, IoT telemetry, third-party APIs, and unstructured text—often in the same query.

Several limitations are now hard to ignore. Batch-heavy pipelines create latency between when an event happens and when it becomes available for analysis. By the time the marketing team sees a drop in conversions, the campaign is already underperforming. Manual data preparation consumes the majority of analyst time, with cleansing, joining, and reconciling data across systems scaling poorly. Slow reporting cycles mean decisions are made on stale information; in fast-moving categories such as e-commerce, logistics, or financial services, a 24-hour lag is a competitive disadvantage.

Limited schema flexibility makes it difficult to onboard new data sources without lengthy modeling exercises. Cost and scale issues emerge as data volumes grow—on-premises warehouses force capacity planning years in advance, while older cloud setups often lack workload elasticity. The combined result is a system that consumes engineering effort, frustrates business users, and cannot keep up with AI workloads that demand fresh, well-structured data in volume.

How AI Is Transforming the Modern Data Warehouse Stack

AI is not just another workload running on top of the warehouse. It is changing how the warehouse itself operates. Six areas stand out.

-

Predictive Analytics and Forecasting

Machine learning models embedded in or alongside the data warehouse turn historical data into forward-looking insight. Customer churn, demand forecasting, inventory optimization, and price sensitivity can be modeled directly on warehouse data, removing the need to extract information into separate ML environments. This shortens the path from data to decision and keeps models close to governed, trusted sources—a meaningful advantage when audit and explainability matter.

-

Automated Data Quality, Cleansing, and Schema Drift Detection

Clean data is good data. AI models can detect anomalies, missing values, duplicate records, and schema drift automatically. For high-volume datasets such as transactional records, product catalogs, customer feedback, and IoT readings, this reduces manual cleansing effort dramatically. Algorithms identify outliers, flag suspect records, and suggest corrections, freeing data engineers to focus on architecture rather than cleanup work.

-

AI-Assisted SQL Generation for Faster Self-Service Analytics

Generative AI is changing how people interact with data. Modern tools can translate plain-English questions into SQL drafts, suggest joins, explain schemas, and help users explore data faster. However, production-grade use still depends on semantic models, access controls, query validation, and human review for critical decisions. Clean, trusted data is the foundation of reliable analytics and AI.

-

LLM-Powered Conversational Analytics and Natural Language Querying

Beyond SQL generation, large language models enable true conversational analytics. A product manager can ask, "Which regions saw the highest order cancellations last quarter, and what were the top reasons?" and get a structured answer drawn directly from warehouse tables. Chatbots built on this pattern serve both end customers—through product recommendations and complaint handling—and internal teams looking for fast, context-aware insight. LLMs that support multiple languages also help global enterprises reach diverse audiences. Tarento's ProjectAnuvaad, an AI-powered Indic language translation platform, is one example of how language models extend the reach of data products.

-

AI-Driven Data Warehouse Performance Optimization

AI learns from past query patterns and dynamically tunes execution plans, caching strategies, and resource allocation. Workload prediction allows the warehouse to scale compute up or down automatically, matching demand without manual intervention. The result can be faster queries and better cost control, especially in cloud-native environments where compute is elastic and usage-based.

-

Anomaly Detection, Data Security, and AI-Based Threat Monitoring

Security is paramount. AI models monitor access patterns, query behavior, and data movement in real time, flagging anomalies that may indicate breaches, insider threats, or misconfigured pipelines. The same techniques support compliance by identifying when sensitive data is being accessed or shared outside policy, allowing security teams to respond before small issues become incidents.

Why AI-Ready Data Is Critical for Enterprise AI Success

Every AI initiative depends on the quality of the data underneath it. A model trained on inconsistent, ungoverned, or incomplete data will produce inconsistent, ungoverned, and incomplete answers—no matter how sophisticated the algorithm.

AI-ready data has a few defining characteristics. It is trusted, meaning lineage is documented, sources are verified, and definitions are consistent across the business. It is governed, with controlled access, masked or encrypted sensitive fields, and auditable usage. It is well-structured, with clear schemas, defined relationships, and metadata rich enough for both humans and models to understand. It is timely, flowing from source to consumption layer fast enough to support the use case. And it is complete, with critical attributes present, gaps documented, and edge cases handled.

Without these foundations, AI projects stall. Models drift, dashboards contradict each other, and business users lose confidence in the data platform. Enterprises that invest in AI-ready data early see faster ROI on every downstream initiative, from generative AI assistants to autonomous decision systems. The discipline pays off well beyond the first AI use case.

Key Risks in AI-Enabled Data Warehousing

Bringing AI into the data warehouse introduces new risks alongside new capabilities. Leaders need to plan for them deliberately rather than treating them as edge cases.

-

Privacy and data protection. Personal data flowing into AI models must be handled in line with GDPR, India's DPDP Act, and sector-specific rules. Pseudonymization, tokenization, and synthetic data generation are increasingly part of the toolkit for keeping AI workloads compliant without starving them of useful signal.

-

Access control. Role-based access works for traditional BI, but AI use cases need finer controls—row-level security, column-level masking, and dynamic policies that adjust based on the user's context and intent. The same dataset may need different exposure depending on who is asking and why.

-

Hallucination and unreliable outputs. LLM-powered analytics can produce plausible but incorrect answers. Without a grounding in trusted warehouse data and clear citation of sources, business users may act on fabricated information. Retrieval-augmented generation, query validation, and human-in-the-loop review are essential controls in any production deployment.

-

Lineage and explainability. Regulators, auditors, and internal stakeholders increasingly demand to know where data came from, how it was transformed, and which model produced a given output. Lineage tracking must extend from source systems through to model predictions, not stop at the warehouse boundary.

-

Compliance. Industry regulations across BFSI, healthcare, and the public sector impose requirements on data residency, retention, and consent. AI workloads must operate within these boundaries, not around them.

-

Poor-quality training data. Models trained on biased, outdated, or incomplete data will amplify those problems at scale. Continuous data quality monitoring is no longer optional—it is a core part of the AI operating model.

Each of these risks is manageable, but only with a deliberate architecture and governance framework. Bolting AI onto an ungoverned warehouse is a fast path to expensive mistakes.

How Tarento Helps Enterprises Build AI-Ready Data Warehouses

Tarento works with enterprises to modernize their data warehouses and make them AI-ready, end-to-end. Through our Data and Analytics practice, we support organizations across the full lifecycle of a modern data platform.

We help assess the current state, define the target architecture, and design cloud-native data platforms on Azure, AWS, GCP, or Snowflake that support both analytics and AI workloads at scale. Cloud data platform readiness is the foundation on which everything else stands.

Moving from legacy on-premises warehouses to modern cloud platforms is complex. Our data migration teams handle schema conversion, ETL/ELT modernization, validation, and cutover with minimal business disruption, drawing on patterns proven across BFSI, retail, public sector, and telecom engagements.

We implement governance frameworks covering data quality, lineage, access control, cataloging, and compliance—building the foundation that AI initiatives depend on. Governance is not a slowdown; done well, it is what allows AI to scale safely.

Finally, we bring AI capability directly into the data platform, from embedding ML models into the warehouse to building LLM-powered analytics interfaces. ProjectAnuvaad, our Indic-language translation initiative, is one example of how we apply AI to real-world enterprise and societal challenges.

The goal is not just a faster warehouse. It is a data foundation that supports analytics, AI, and self-service across the enterprise—governed, trusted, and ready for whatever comes next.

Building a Future-Ready Data Warehouse for AI, Analytics, and Self-Service BI

AI, real-time expectations, and the demand for self-service analytics are reshaping the traditional data warehouse. Enterprises that treat this as a routine upgrade will fall behind. Those that invest in AI-ready data, modern cloud architectures, and strong governance will unlock new levels of efficiency, insight, and competitive advantage. The opportunity is significant—and so is the work required to capture it.

Explore how Tarento helps enterprises manage data pipelines and warehouse operations, and accelerate legacy-to-cloud data platform migration with DataVolve.